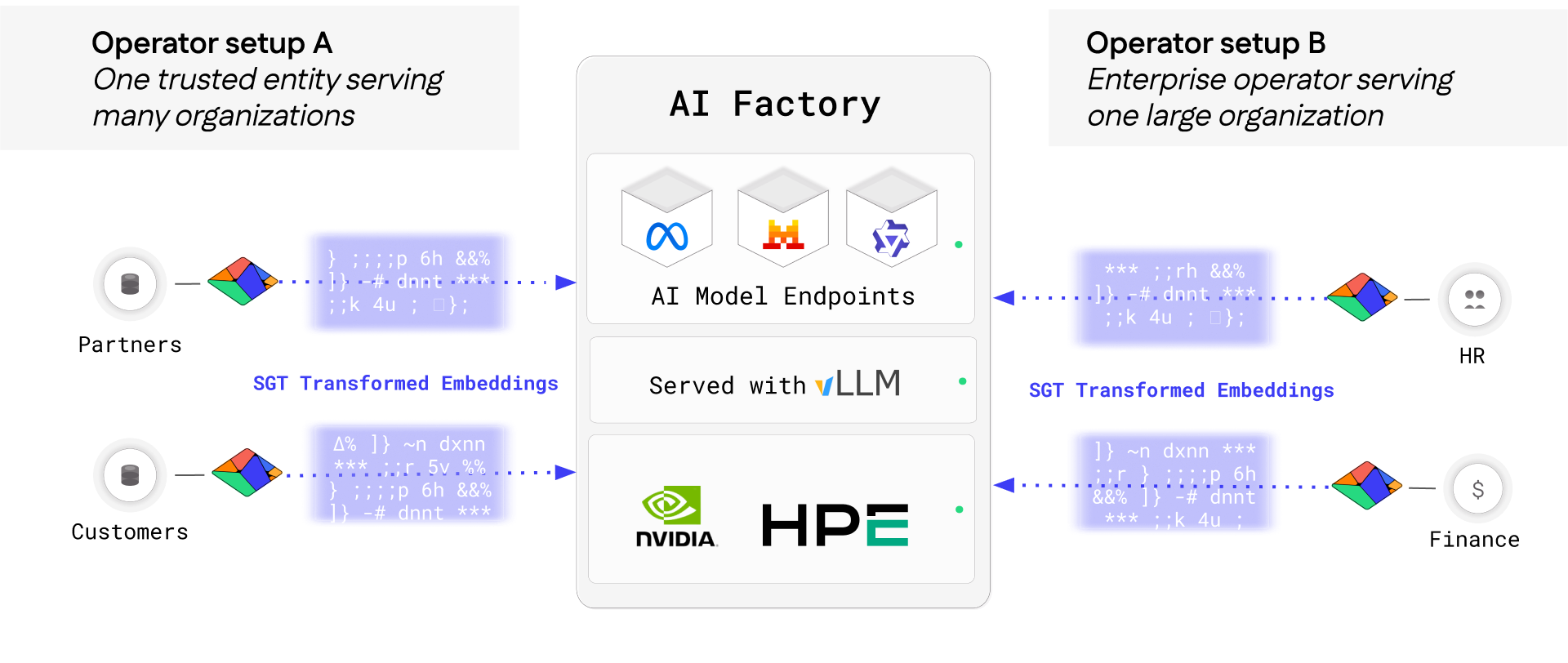

Private Inference for Multi-Tenant AI Factories

Eliminate GPU carveouts. Run every workload on shared infrastructure without exposing tenant data.

Trusted and Proven

Why Protopia for Financial Services

Protopia’s SGT complements AWS infrastructure with a drop-in privacy layer that uses stochastic transformation to protect proprietary data, IP-sensitive content, and other critical enterprise assets, mitigating the risk of data leakage.

Built for speed, sovereignty, and scale, Protopia + AWS enable private inference without compromising performance or infrastructure efficiency.

Model Agnostic

No retraining needed; compatible with leading open-weight LLMs

No Performance Trade-offs

Milliseconds of latency; preserves full accuracy

Accelerated Compliance

Supports privacy-by-design, audit, and localization

Deploy Models Fast

900x to 15,000x faster than alternative cryptographic approaches

Latency

Milliseconds of added latency, no accuracy loss

Financial services

Validated in global financial institutions

Architecture Overview

Protopia Stained Glass Transform: Private-by-Default Multi-Tenant Inference

Stained Glass Transform (SGT) converts plaintext inputs into stochastic embeddings that preserve model fidelity and performance, and it is exposed through an OpenAI-compatible API. Enterprises can quickly deploy SGT as a lightweight privacy layer on AWS-hosted inference, reducing data exposure risk without compromising performance.
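
To make the idea of a stochastic embedding concrete, here is a minimal toy sketch in Python. It is not Protopia's algorithm: this page does not describe how SGT derives its transformation, so fixed Gaussian noise stands in for it, and `toy_embed`, `stochastic_transform`, `DIM`, and `scale` are all hypothetical names chosen for illustration. The sketch shows only one property, that the same plaintext yields a different numeric representation on every call. It offers no real privacy guarantee.

```python
import hashlib
import random
from typing import List, Optional

DIM = 8  # toy embedding width (assumption); real models use far larger vectors


def toy_embed(token: str) -> List[float]:
    """Deterministic stand-in for a model's embedding lookup (hypothetical)."""
    digest = hashlib.sha256(token.encode("utf-8")).digest()
    # Map the first DIM bytes of the hash to floats in [-1, 1].
    return [(b / 127.5) - 1.0 for b in digest[:DIM]]


def stochastic_transform(
    text: str, scale: float = 0.1, rng: Optional[random.Random] = None
) -> List[List[float]]:
    """Embed each whitespace token, then add fresh Gaussian noise per call."""
    rng = rng or random.Random()
    return [
        [x + rng.gauss(0.0, scale) for x in toy_embed(tok)]
        for tok in text.split()
    ]


first = stochastic_transform("wire transfer 4821", rng=random.Random(1))
second = stochastic_transform("wire transfer 4821", rng=random.Random(2))
print(len(first), len(first[0]))  # number of tokens, values per token
print(first != second)            # same input, different representation
```

The seeded `random.Random` instances are only there to make the example repeatable. Leaving `rng` unset would draw new noise on every call.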

Secure Financial Use Cases

From fine-tuning to inference, Protopia’s Stained Glass technology secures sensitive inputs at every stage of model development and deployment. Explore how Protopia supports safe, scalable LLM adoption.

AI-as-a-Service

Safely analyze real-time transactions for AML, fraud, or suspicious activity without exposing customer PII.

Sovereign AI

Process sensitive onboarding documents and regulatory submissions in full compliance with privacy mandates.

THE SOLUTION

The Protopia Solution

Protopia’s Stained Glass Transform (SGT) solves the fundamental risk in AI for finance by eliminating plaintext exposure entirely. Sensitive data is transformed client-side, so no readable information is ever sent to the model or hosting environment. SGT works wherever your data resides: on-premises, in your private cloud, or within region-specific environments. This helps you comply with residency rules and internal controls.
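
The client-side flow described above can be sketched as follows. This is a hedged illustration, not Protopia's SDK or wire format: `build_private_request`, `placeholder_transform`, the `input_embeddings` field, and the model name are all hypothetical, and the placeholder transform is not a privacy mechanism. The point is the trust boundary: the transformation runs locally, and only its numeric output is serialized into the request body.

```python
import json
from typing import Callable, List

Vector = List[float]


def build_private_request(
    prompt: str,
    transform: Callable[[str], List[Vector]],
    model: str = "example-model",  # hypothetical model name
) -> str:
    """Transform the prompt locally and return a JSON body without plaintext."""
    embeddings = transform(prompt)  # runs inside the tenant's own environment
    body = {
        "model": model,
        # Hypothetical field name; the real request format is not given here.
        "input_embeddings": embeddings,
    }
    return json.dumps(body)


def placeholder_transform(text: str) -> List[Vector]:
    """Stand-in only: one opaque 2-value vector per whitespace token."""
    return [
        [float(len(tok)), float(sum(tok.encode("utf-8")) % 97)]
        for tok in text.split()
    ]


prompt = "Account 4821 flagged for review"
payload = build_private_request(prompt, placeholder_transform)

# None of the original words appear in what would leave the client.
leaked = [word for word in prompt.split() if word in payload]
print(leaked)
```

Passing the transform in as a callable keeps the request-building code independent of whichever transformation is actually deployed.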

Protopia + AWS: private inference at scale

Power Private Inference on AWS at Scale

Unlock New Use Cases. Activate Your Proprietary Data.

Enable secure, private inference on proprietary data that was previously too sensitive to use. Ship POCs to production without compromising privacy or control.

Hit Performance Goals. Protect Your IP.

Achieve high-throughput inference on proprietary datasets without infrastructure changes. Protopia’s SGT integrates directly with AWS endpoints, preserving privacy in shared compute environments.

Deploy Models Fast. Expand Model Access.

Protopia runs on AWS without custom infrastructure or refactoring. Protect Llama 3.1B today, preserving fidelity and metadata. More model support coming soon.

Try Private LLM Inference Today with Your AWS Credits

Test real-world LLM use cases in your AWS sandbox, with no training or infrastructure setup needed.

Use your AWS credits to explore secure inference use cases with Protopia’s Stained Glass Transform.

Proprietary Risk and Research Models

Protect internal analytics, trading strategies, and research assets while leveraging advanced AI without moving data outside controlled environments.

Agentic Workflows

Securely orchestrate data between agents, apps, and endpoints without risking leaks or losing control.

Customer Service and Onboarding AI

Enable secure chatbots, virtual assistants, and automated workflows using account data and client communications.

Ready to secure your sensitive financial data in AI workflows?