It’s Not a Matter of If, It’s How Badly You’ll Be Hacked

“There are two types of companies: those who have been hacked, and those who don’t yet know they have been hacked.” – John Chambers, World Economic Forum, 2015

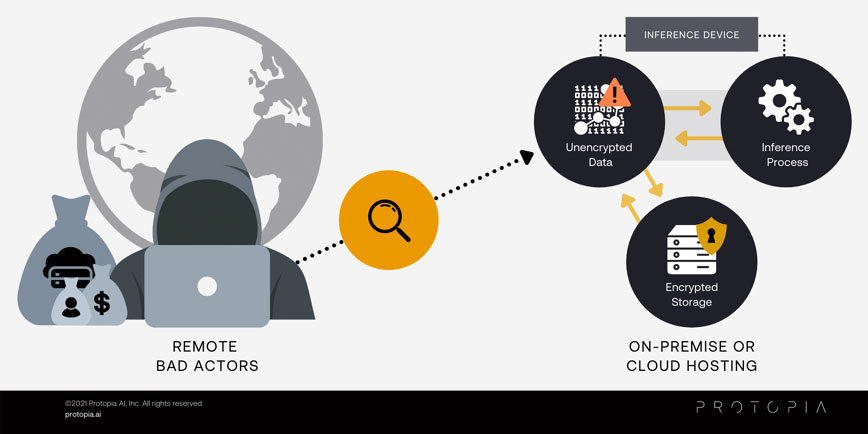

A global army of hackers is targeting businesses’ data, and your company is in their crosshairs. These hackers aim to monetize the data your company owns or to hold it hostage with ransomware. It’s only a matter of time until your company is targeted too. The more sensitive the data you handle, the more they can extort from you. While experts point to encryption as the best defense against exposure of critical information, they fall silent when it comes to Artificial Intelligence (AI) security. Since data is unencrypted during AI’s prediction process (a step called AI inference), that data is exposed whenever an attacker gains privileges to read memory. Because the most important part of AI is performed on unencrypted data, there is a structural gap that encryption alone does not cover.

Knowing that there are hundreds of thousands of bad actors out there actively targeting your business, many falsely believe that where the inference process takes place is the most important determinant for securing their data; nothing could be further from the truth.

On-premises infrastructure provides a false sense of security, primarily through proximity to and visibility of infrastructure and applications, but how can your small security team stay on top of every new attack vector? In 2020 alone, 18,000 common vulnerabilities and exposures (CVEs) were published, a prime indicator of the ever-growing volume of attacks (NIST NVD analysis, 2020). When a hacker gets inside your network, it is often with machine-level root access, allowing them not only to see your sensitive data but also to cover their tracks on the way out.

According to IBM, it takes an average of 206 days before a breach is even identified and another 73 days to contain it, for a total of 279 days. This means a hacker who has obtained access to an inference device can see all of your sensitive, unencrypted data moving through the inference process (as depicted by the diagram below) for roughly nine months on average. The SolarWinds hack showed that even some of the largest and savviest technology companies, like Cisco Systems, Deloitte, Intel, and Nvidia, can fall victim to major on-premises attacks, along with countless state and federal government organizations.

“It takes an average of 206 days before a breach is even identified and another 73 days to contain it, for a total of 279 days.” (IBM Security: Cost of a Data Breach Report, 2019)

With such a large liability exposure from on-premises AI, many see cloud providers as the answer to this dilemma. While major cloud providers are far better equipped to address security than the average enterprise, at the end of the day a customer’s application/inference server is only as safe as the configuration and software stack deployed by the customer themselves. Cloud providers put instance security in the hands of the individual customers, often resulting in improper configurations. In fact, Gartner believes that up to 95% of all cloud security failures are the fault of the customer, not the cloud provider.

To put a finer point on this situation, the prevalence of machine compromises is the reason an entire market of businesses exists to perform security assessments of customers’ cloud infrastructure. Dozens of companies, like CrowdStrike, Randori, and Tessian, have built entire businesses around reviewing a customer’s cloud applications and pointing out improperly configured instances that expose potential security flaws. Even cloud giant Amazon Web Services sees the opportunity; it will sell you a tool called GuardDuty that lets a customer look for potential issues arising from insecurely configured AWS services.

“95% of all cloud security failures are the fault of the customer, not the cloud provider.” (Gartner)

Location is clearly immaterial to the issue; the fundamental challenge arises while data is being used by the inference process itself. Since few organizations, if any, log the data fed into their inference processes, it is impossible to know what was exposed or how far the breach extends. Not knowing the extent of the exposure also makes it incredibly difficult for an insurance carrier to assess any remediation after an incursion. The best way to secure the inference process is to render inference data useless to the attacker in the event of a compromise. And this is what Protopia AI can do for you.

Protopia AI is the only non-obtrusive, software-only solution that delivers confidential inference. By feeding the AI inference process only the data that is essential to the task, Protopia helps limit what your inference process can expose to hackers. Through our patented technology, Protopia masks away any information that is not specifically required to complete the inference.

In the example above, the AI process is counting the number of faces, not trying to identify any single person.

- The image on the left shows both the face count (required), and the sensitive identifiable facial features (not required). A hacker viewing this feed would have access to both types of data.

- The masked view on the right still allows the inference task to count the faces but removes the sensitive, identifiable features, leaving a hacker with nothing of value to them and avoiding any liability for your business.

For more details, we’ve prepared a video showing this process and outputs. (Note: there is no audio during the video)

Protopia is flexible enough for on-premises AI or cloud AI; it makes no difference where your inference process is hosted. With Protopia, a business can massively reduce data exposure by only delivering the features in each data record needed by the inference process.
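To make the idea of delivering only the needed features concrete, here is a minimal Python sketch of plain data minimization. It is not Protopia's patented masking technology, which this post does not describe in detail. It is a simple allow-list filter, and the task name, field names, and the `minimize_record` helper are all hypothetical, chosen only to mirror the face-counting example.

```python
from typing import Any, Dict, Mapping, Tuple

# Hypothetical allow-list mapping each inference task to the only fields it needs.
# Task and field names are illustrative assumptions, not part of any real API.
REQUIRED_FEATURES: Dict[str, Tuple[str, ...]] = {
    "face_count": ("frame_id", "face_boxes"),
}


def minimize_record(record: Mapping[str, Any], task: str) -> Dict[str, Any]:
    """Return a copy of `record` containing only the fields `task` needs."""
    allowed = REQUIRED_FEATURES[task]  # raises KeyError for an unknown task
    missing = [name for name in allowed if name not in record]
    if missing:
        raise ValueError(f"record is missing required fields: {missing}")
    # Everything not on the allow-list is dropped before inference sees it.
    return {name: record[name] for name in allowed}


if __name__ == "__main__":
    frame = {
        "frame_id": 17,
        "face_boxes": [(10, 20, 64, 64), (120, 40, 60, 60)],
        "face_embeddings": [[0.12, 0.98], [0.55, 0.31]],  # identifying, not needed
        "camera_location": "lobby",                        # not needed for counting
    }
    print(minimize_record(frame, "face_count"))
```

In this sketch, an attacker who reads the inference process's memory would see only bounding boxes and a frame identifier, not the identifying fields that were filtered out upstream.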
